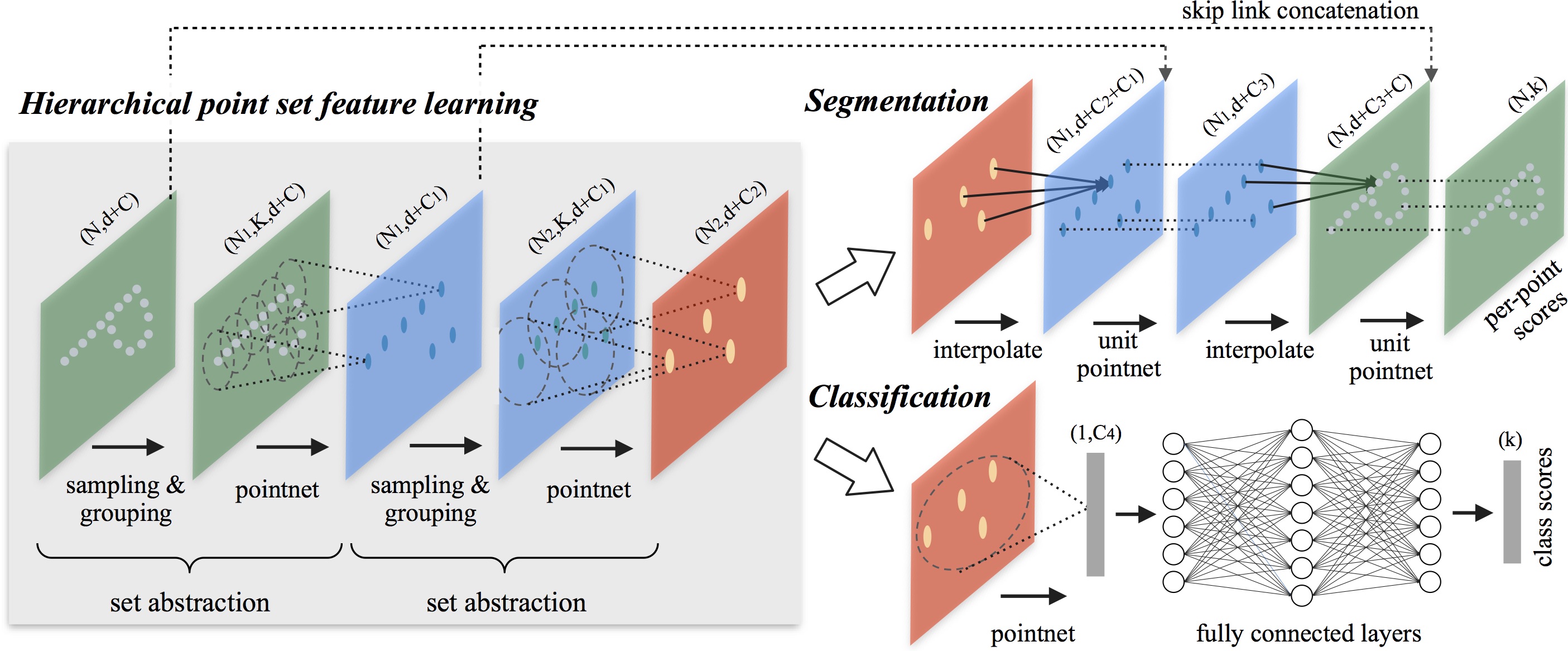

Figure 1. PointNet++ Architecture for Point Set Segmentation and Classification. We introduce a novel type of neural network, named PointNet++, that processes a set of points sampled in a metric space in a hierarchical fashion (2D points in Euclidean space are used for this illustration). The general idea of PointNet++ is simple. We first partition the set of points into overlapping local regions using the distance metric of the underlying space. As in CNNs, we extract local features that capture fine geometric structures from small neighborhoods; these local features are then grouped into larger units and processed to produce higher-level features. This process is repeated until we obtain the features of the whole point set.

Few prior works study deep learning on point sets. PointNet by Qi et al. is a pioneer in this direction. However, by design PointNet does not capture local structures induced by the metric space in which the points live, which limits its ability to recognize fine-grained patterns and to generalize to complex scenes. In this work, we introduce a hierarchical neural network that applies PointNet recursively on a nested partitioning of the input point set. By exploiting metric space distances, our network is able to learn local features with increasing contextual scales. Observing further that point sets are usually sampled with varying densities, which greatly degrades the performance of networks trained on uniform densities, we propose novel set learning layers that adaptively combine features from multiple scales. Experiments show that our network, called PointNet++, is able to learn deep point set features efficiently and robustly. In particular, results significantly better than the state of the art have been obtained on challenging benchmarks of 3D point clouds.
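To make the idea of applying a PointNet-style feature extractor recursively on a nested partitioning more concrete, the following is a minimal NumPy sketch, not the implementation used in this work. Several choices are assumptions made only for illustration: region centers are picked by farthest point sampling, regions are formed by a fixed radius query, the per-region "PointNet" is reduced to one shared linear layer with ReLU followed by max pooling, and the weights are random rather than learned. The function names (`farthest_point_sample`, `group_by_radius`, `abstraction_level`) are hypothetical.

```python
import numpy as np

def farthest_point_sample(xyz, n_centroids):
    """Greedily pick indices of points that are far apart (assumed sampling scheme)."""
    n = xyz.shape[0]
    chosen = np.zeros(n_centroids, dtype=np.int64)
    min_dist = np.full(n, np.inf)  # squared distance to the nearest chosen point
    idx = 0                        # start from the first point for determinism
    for i in range(n_centroids):
        chosen[i] = idx
        d = np.sum((xyz - xyz[idx]) ** 2, axis=1)
        min_dist = np.minimum(min_dist, d)
        idx = int(np.argmax(min_dist))
    return chosen

def group_by_radius(xyz, centroids, radius):
    """For each centroid, return indices of points within `radius` (regions may overlap)."""
    d = np.linalg.norm(xyz[None, :, :] - centroids[:, None, :], axis=2)
    return [np.nonzero(row <= radius)[0] for row in d]

def abstraction_level(xyz, feats, n_centroids, radius, weights):
    """One hierarchy level: sample centers, group neighbors, pool a local feature."""
    centroids = xyz[farthest_point_sample(xyz, n_centroids)]
    groups = group_by_radius(xyz, centroids, radius)
    out = []
    for c, g in zip(centroids, groups):
        local = xyz[g] - c  # coordinates relative to the region center
        x = local if feats is None else np.concatenate([local, feats[g]], axis=1)
        h = np.maximum(x @ weights, 0.0)  # shared per-point layer + ReLU (toy PointNet)
        out.append(h.max(axis=0))         # symmetric max pooling over the region
    return centroids, np.stack(out)

rng = np.random.default_rng(0)
xyz = rng.random((256, 2))              # 2D points, as in the Figure 1 illustration
w1 = rng.standard_normal((2, 8))        # random stand-ins for learned weights
w2 = rng.standard_normal((2 + 8, 16))

xyz1, f1 = abstraction_level(xyz, None, n_centroids=32, radius=0.2, weights=w1)
xyz2, f2 = abstraction_level(xyz1, f1, n_centroids=4, radius=0.5, weights=w2)
global_feat = f2.max(axis=0)            # feature of the whole point set
print(f1.shape, f2.shape, global_feat.shape)
```

Each level reduces the number of points while widening the region that every remaining feature summarizes, which is the sense in which contextual scale increases through the hierarchy. The radius and the number of centers per level are illustrative values only.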

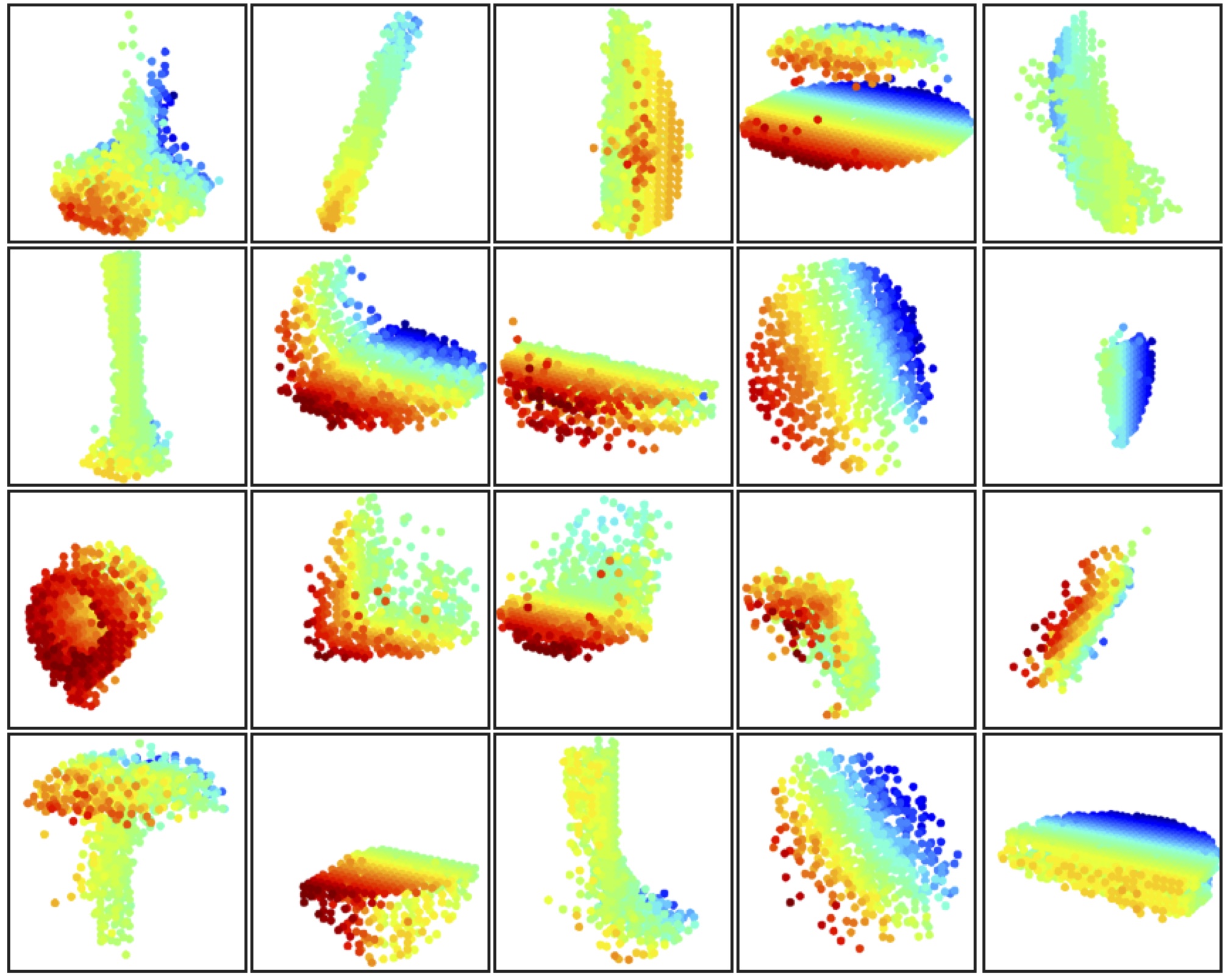

Figure 2. Visualization of Point Cloud Patterns Learned from PointNet++. Patterns learnt from 20 (out of the 1,024) neurons in the first level are shown. We visualize the point cloud patterns learnt by searching for point clouds (in the unit sphere) that activate the neurons the most. Since the model is trained for ModelNet40 shape classification, a dataset that contains mostly furniture, we see clear structures of planes, double planes, lines, corners, etc. Color in the figure indicates point depth (red is near, blue is far).